Blurhash

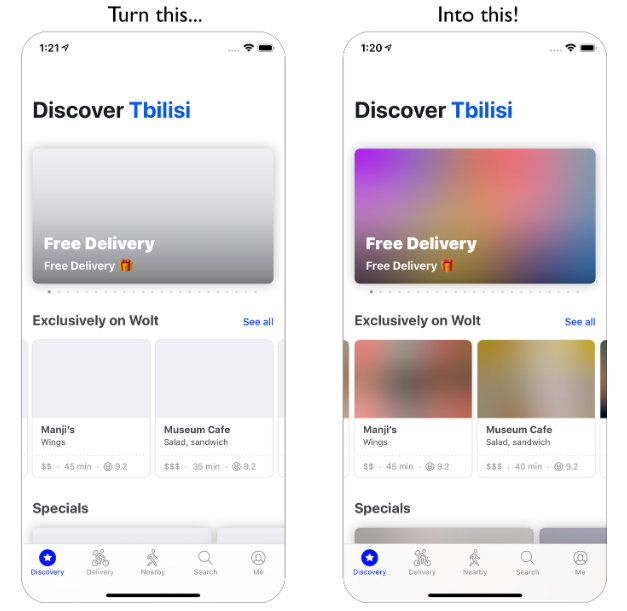

BlurHash is a compact representation of a placeholder for an image. Instead of displaying boring grey little boxes while your image loads, show a blurred preview until the full image has been loaded.

The algorithm was created by woltapp/blurhash, which also includes an algorithm explanation.

Example Workflow

This is how I use it in my project:

Usage

The decoders are written in Swift and Kotlin and are copied from the official woltapp/blurhash repository (MIT license). I use lightweight in-memory caching so that the (quite expensive) Blurhash image is only re-rendered when one of the Blurhash-specific props (blurhash, decodeWidth, decodeHeight or decodePunch) has changed.

| Name | Type | Explanation | Required | Default Value |
|---|---|---|---|---|
| `blurhash` | `string` | The blurhash string to use. Example: `LGFFaXYk^6#M@-5c,1J5@[or[Q6.` | ✅ | `undefined` |
| `decodeWidth` | `number` | The width (resolution) to decode to. Higher values decrease performance, use `16` for large lists, otherwise you can increase it to `32`. See: performance | ❌ | `32` |
| `decodeHeight` | `number` | The height (resolution) to decode to. Higher values decrease performance, use `16` for large lists, otherwise you can increase it to `32`. See: performance | ❌ | `32` |
| `decodePunch` | `number` | Adjusts the contrast of the output image. Tweak it if you want a different look for your placeholders. | ❌ | `1.0` |
| `decodeAsync` | `boolean` | Asynchronously decode the Blurhash on a background Thread instead of the UI-Thread. See: Asynchronous Decoding | ❌ | `false` |
| `resizeMode` | `'cover' \| 'contain' \| 'stretch' \| 'center'` | Sets the resize mode of the image. (no, `'repeat'` is not supported.) See: Image::resizeMode | ❌ | `'cover'` |
| All `View` props | `ViewProps` | All properties from the React Native `View`. Use `style.width` and `style.height` for display-sizes. | ❌ | `{}` |

Read the algorithm description for more details.
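To make the shape of the `blurhash` string more concrete, here is a minimal sketch that reads the component counts from the first character and checks the expected length. It follows the layout in the woltapp/blurhash algorithm description (one size-flag character, one maximum-AC character, four DC characters, then two characters per remaining component). The name `describeBlurhash` is hypothetical and not part of this library's API.

```typescript
// Base83 alphabet used by the BlurHash format.
const BASE83 =
  '0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz#$%*+,-.:;=?@[]^_{|}~';

interface BlurhashInfo {
  componentsX: number;
  componentsY: number;
}

// Hypothetical helper: returns the component counts, or null if the
// string is too short, contains foreign characters, or has the wrong length.
function describeBlurhash(blurhash: string): BlurhashInfo | null {
  if (blurhash.length < 6) return null;
  for (let i = 0; i < blurhash.length; i++) {
    if (BASE83.indexOf(blurhash.charAt(i)) === -1) return null;
  }
  // The first character encodes (componentsY - 1) * 9 + (componentsX - 1).
  const sizeFlag = BASE83.indexOf(blurhash.charAt(0));
  const componentsX = (sizeFlag % 9) + 1;
  const componentsY = Math.floor(sizeFlag / 9) + 1;
  // 1 size flag + 1 max AC + 4 DC + 2 per remaining component.
  const expectedLength = 4 + 2 * componentsX * componentsY;
  if (blurhash.length !== expectedLength) return null;
  return { componentsX, componentsY };
}
```

For the example string from the props table, this sketch reports 4 x 3 components.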

Example Usage:

    import { Blurhash } from 'react-native-blurhash';

    export default function App() {
      return (
        <Blurhash
          blurhash="LGFFaXYk^6#M@-5c,1J5@[or[Q6."
          style={{flex: 1}}
        />
      );
    }

See the example App for a full code example.

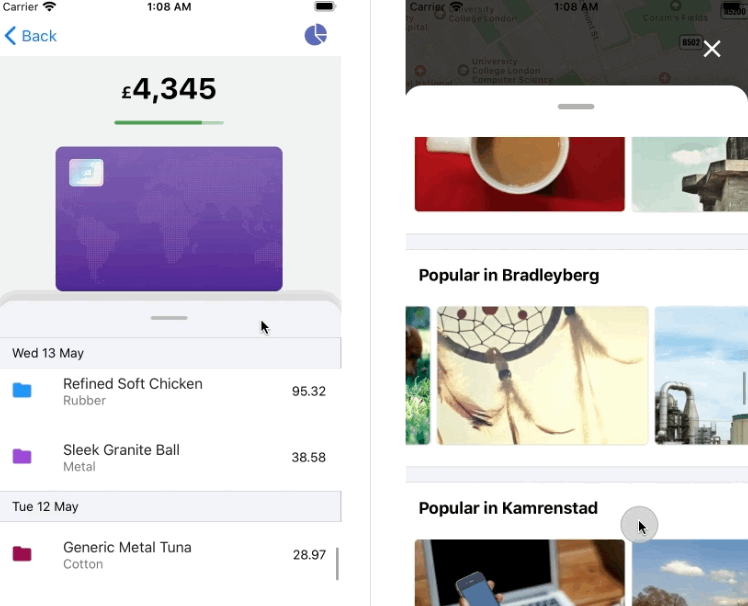

iOS and Android screenshots.

To run the example App, execute the following commands:

    cd react-native-blurhash/example/
    yarn
    cd ios; pod install; cd ..
    npm run ios
    npm run android

Encoding

This library also includes a native Image encoder, so you can encode Images to blurhashes straight out of your React Native App!

const blurhash = await Blurhash.encode('https://blurha.sh/assets/images/img2.jpg', 4, 3);

Because encoding an Image is a pretty heavy task, this function is non-blocking and runs on a separate background Thread.
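Since encoding is asynchronous and can fail (for example when the image cannot be loaded), it may help to validate the arguments up front and handle rejection explicitly. The sketch below is one way to do that. It assumes the encoder has the same shape as the `Blurhash.encode` call shown above; `checkComponents` and `encodeOrNull` are hypothetical helpers, and the 1 to 9 range is the component limit of the BlurHash format.

```typescript
// Assumed to match the shape of the Blurhash.encode call shown above.
type EncodeFn = (
  imageUri: string,
  componentsX: number,
  componentsY: number
) => Promise<string>;

// The BlurHash format allows 1 to 9 components per axis.
function checkComponents(componentsX: number, componentsY: number): void {
  for (const value of [componentsX, componentsY]) {
    if (Math.floor(value) !== value || value < 1 || value > 9) {
      throw new RangeError(`components must be integers from 1 to 9, got ${value}`);
    }
  }
}

// Hypothetical wrapper: throws on invalid arguments, resolves to null
// if the encoder itself rejects.
function encodeOrNull(
  encode: EncodeFn,
  imageUri: string,
  componentsX: number,
  componentsY: number
): Promise<string | null> {
  checkComponents(componentsX, componentsY);
  return encode(imageUri, componentsX, componentsY).catch(() => null);
}
```

In an app, the first argument could be something like `(uri, x, y) => Blurhash.encode(uri, x, y)`.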

Performance

The decoders are fast, which means you should be able to use them in collections quite easily. Increasing the decodeWidth and decodeHeight props decreases performance. I'd recommend values of 16 for large lists, and 32 otherwise. Play around with the values, but keep in mind that you probably won't see a difference when increasing them above 32.
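As a rough illustration of why the decode size matters: a BlurHash decoder following the reference algorithm evaluates every component for every output pixel, so the work grows with width x height x components. The sketch below compares relative costs under that assumption. `relativeDecodeCost` is a hypothetical helper, and its result is an operation count, not a time measurement.

```typescript
// Hypothetical helper: number of basis-function evaluations for one decode,
// assuming one evaluation per pixel per component.
function relativeDecodeCost(
  decodeWidth: number,
  decodeHeight: number,
  componentsX: number,
  componentsY: number
): number {
  return decodeWidth * decodeHeight * componentsX * componentsY;
}

// A 4 x 3 component hash decoded at the two recommended sizes.
const costAt16 = relativeDecodeCost(16, 16, 4, 3);
const costAt32 = relativeDecodeCost(32, 32, 4, 3);
console.log(costAt32 / costAt16); // prints 4
```

Doubling both dimensions quadruples the work, which is why 16 is the safer choice for long lists.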

Benchmarks

All times are in milliseconds and represent the minimum time it took to decode and render the image (best of 10 runs). These tests were made with decodeAsync={false}, so keep in mind that the async decoder might add some time on the first run because of thread start overhead. iOS tests were run on an iPhone 11 Simulator and Android tests on a Pixel 3a, both on the same MacBook Pro 15" i9.

| Blurhash Size | iOS | Android |
|---|---|---|
| 16 x 16 | 3 ms | 23 ms |
| 32 x 32 | 10 ms | 32 ms |
| 400 x 400 | 1,134 ms | 130 ms |
| 2000 x 2000 | 28,894 ms | 1,764 ms |

Values larger than 32 x 32 are only used for benchmarking purposes; don't use them in your app! 32 x 32 or 16 x 16 is plenty!

As you can see, the Android decoder is a lot faster than the iOS decoder at higher values, but slower at lower values. I'm not quite sure why; I'll gladly accept any pull requests that optimize the decoders.
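If you want to take similar "best of N" measurements for your own code, a minimal timing helper could look like the sketch below. This is not the harness used for the table above: it only times a synchronous function and does not include rendering. The name `bestOf` and the injectable clock are assumptions made for illustration.

```typescript
// Runs `task` `runs` times and returns the fastest duration in milliseconds.
// The clock is injectable so the helper can be tested deterministically.
function bestOf(
  runs: number,
  task: () => void,
  now: () => number = Date.now
): number {
  let best = Infinity;
  for (let i = 0; i < runs; i++) {
    const start = now();
    task();
    best = Math.min(best, now() - start);
  }
  return best;
}
```

Taking the minimum rather than the average filters out runs that were slowed down by unrelated work on the device.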

Asynchronous Decoding

Use decodeAsync={true} to decode the Blurhash on a separate background thread instead of the main UI thread. This is useful when the Blurhash decoder causes stutters, e.g. in large lists.

Threads are re-used (iOS: DispatchQueue, Android: kotlinx Coroutines).

Caching

Previously rendered Blurhashes will get cached, so they don't re-decode on every state change, as long as the blurhash, decodeWidth, decodeHeight and decodePunch properties stay the same.
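The idea can be sketched as a memoizing wrapper keyed on exactly those four properties. This is only an illustration in TypeScript; the library's actual caching may work differently, and `createCachedDecoder` and `DecodeParams` are hypothetical names.

```typescript
interface DecodeParams {
  blurhash: string;
  decodeWidth: number;
  decodeHeight: number;
  decodePunch: number;
}

// Hypothetical helper: wraps an expensive decode function so that repeated
// calls with the same four parameters reuse the earlier result.
function createCachedDecoder<T>(
  decode: (params: DecodeParams) => T
): (params: DecodeParams) => T {
  const cache = new Map<string, T>();
  return (params: DecodeParams): T => {
    // JSON-encode the tuple so that values cannot run into each other.
    const key = JSON.stringify([
      params.blurhash,
      params.decodeWidth,
      params.decodeHeight,
      params.decodePunch,
    ]);
    if (cache.has(key)) {
      return cache.get(key) as T;
    }
    const result = decode(params);
    cache.set(key, result);
    return result;
  };
}
```

Changing any one of the four values produces a new key and therefore a fresh decode, while unrelated state changes hit the cache.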