react-native-spokestack

React Native plugin for adding voice using Spokestack. This includes speech recognition, wakeword, and natural language understanding, as well as synthesizing text to speech using Spokestack voices.

Requirements

- React Native: 0.60.0+

- Android: Android SDK 21+

- iOS: iOS 13+

Installation

Using npm:

npm install --save react-native-spokestack

or using yarn:

yarn add react-native-spokestack

Then follow the instructions for each platform to link react-native-spokestack to your project:

iOS installation


First, set your iOS deployment target in Xcode to 13.0.

Edit Podfile

Before running pod install, make sure to make the following edits.

react-native-spokestack makes use of relatively new APIs only available in iOS 13+. Set the deployment target to iOS 13 at the top of your Podfile:

platform :ios, '13.0'

We also need to use use_frameworks! in our Podfile in order to support dependencies written in Swift.

target 'SpokestackExample' do

use_frameworks!

#...

For now, use_frameworks! does not work with Flipper, so we also need to disable Flipper. Remove any Flipper-related lines in your Podfile. In React Native 0.63.2, they look like this:

# X Remove or comment out these lines X

use_flipper!

post_install do |installer|

flipper_post_install(installer)

end

# XX

Remove your existing Podfile.lock and Pods folder to ensure no conflicts, then install the pods:

$ npx pod-install

Edit Info.plist

Add the following to your Info.plist to enable permissions.

<key>NSMicrophoneUsageDescription</key>

<string>This app uses the microphone to hear voice commands</string>

<key>NSSpeechRecognitionUsageDescription</key>

<string>This app uses speech recognition to process voice commands</string>

Remove Flipper

While Flipper works on fixing their pod for use_frameworks!, we must disable Flipper. We already removed the Flipper dependencies from Pods above, but there remains some code in the AppDelegate.m that imports Flipper. There are two ways to fix this.

- You can disable Flipper imports without removing any code from the AppDelegate. To do this, open your xcworkspace file in Xcode. Go to your target, then Build Settings, search for "C Flags", and remove -DFB_SONARKIT_ENABLED=1 from the flags.

- Remove all Flipper-related code from your AppDelegate.m.

In our example app, we chose the first option and left the Flipper code in place so that it can be re-enabled if Flipper starts working with use_frameworks! in the future.

Edit AppDelegate.m

Add AVFoundation to imports

#import <AVFoundation/AVFoundation.h>

AudioSession category

Set the AudioSession category. There are several configurations that work.

The following is a suggestion that should fit most use cases:

- (BOOL)application:(UIApplication *)application didFinishLaunchingWithOptions:(NSDictionary *)launchOptions

{

AVAudioSession *session = [AVAudioSession sharedInstance];

[session setCategory:AVAudioSessionCategoryPlayAndRecord

mode:AVAudioSessionModeDefault

options:AVAudioSessionCategoryOptionDefaultToSpeaker | AVAudioSessionCategoryOptionAllowAirPlay | AVAudioSessionCategoryOptionAllowBluetoothA2DP | AVAudioSessionCategoryOptionAllowBluetooth

error:nil];

[session setActive:YES error:nil];

// ...

Android installation


ASR Support

The example usage uses the system-provided ASRs (AndroidSpeechRecognizer and AppleSpeechRecognizer). However, AndroidSpeechRecognizer is not available on 100% of devices. If your app supports a device that doesn't have built-in speech recognition, use Spokestack ASR instead by setting the profile to a Spokestack profile using the profile prop.

See our ASR documentation for more information.

Edit root build.gradle (not app/build.gradle)

// ...

ext {

// Minimum SDK is 21

minSdkVersion = 21

// ...

dependencies {

// Minimum gradle is 3.0.1+

// The latest React Native already has this

classpath("com.android.tools.build:gradle:3.5.3")

Edit AndroidManifest.xml

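Add the necessary permissions to your AndroidManifest.xml. The first permission is often there already. The second is needed for using the microphone. The third is used to make sure models are not downloaded over cellular unless that is explicitly allowed.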

<!-- For TTS -->

<uses-permission android:name="android.permission.INTERNET" />

<!-- For wakeword and ASR -->

<uses-permission android:name="android.permission.RECORD_AUDIO" />

<!-- For ensuring no downloads happen over cellular, unless forced -->

<uses-permission android:name="android.permission.ACCESS_NETWORK_STATE" />

Request RECORD_AUDIO permission

The RECORD_AUDIO permission is special in that it must be both listed in the AndroidManifest.xml and requested at runtime. react-native-spokestack does not do this for you. There are a couple of ways to handle it:

- Recommended: Add a screen to your onboarding that explains the need for the permissions used on each platform (RECORD_AUDIO on Android and Speech Recognition on iOS). Have a look at react-native-permissions to handle permissions in a more robust way.

- Request the permissions only when needed, such as when a user taps on a "listen" button. Avoid asking for permission with no context or without explaining why it is needed. In other words, we do not recommend asking for permission on app launch.

While iOS will bring up permissions dialogs automatically for any permissions needed, you must do this manually in Android.

React Native already provides a module for this. See React Native's PermissionsAndroid for more info.
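As a rough sketch of how the outcome of that runtime request might be handled: PermissionsAndroid.request() resolves with one of the strings 'granted', 'denied', or 'never_ask_again'. The helper below maps each result to a next step for the app. The function and the step names are hypothetical and are not part of React Native or react-native-spokestack.

```typescript
// String values that PermissionsAndroid.request() resolves with
// (the same values as PermissionsAndroid.RESULTS in React Native).
type PermissionResult = 'granted' | 'denied' | 'never_ask_again'

// Hypothetical names for what the app does next; not part of any library.
type NextStep = 'start-pipeline' | 'explain-and-retry' | 'open-settings'

function nextStepForPermission(result: PermissionResult): NextStep {
  if (result === 'granted') {
    return 'start-pipeline'
  }
  if (result === 'denied') {
    // The user can still be asked again, ideally after an explanation.
    return 'explain-and-retry'
  }
  // 'never_ask_again': Android will not show the dialog anymore,
  // so the permission can only be changed in system settings.
  return 'open-settings'
}

// Inside a React Native app, the wiring might look like this:
//
//   const result = await PermissionsAndroid.request(
//     PermissionsAndroid.PERMISSIONS.RECORD_AUDIO
//   )
//   if (nextStepForPermission(result) === 'start-pipeline') {
//     await Spokestack.start()
//   }
```

Keeping the decision in a small pure function makes it easy to unit test without a device.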

Including model files in your app bundle

To include model files locally in your app (rather than downloading them from a CDN), you also need to add the necessary file extensions so the files can be bundled by Metro. To do this, edit your metro.config.js.

const defaults = require('metro-config/src/defaults/defaults')

module.exports = {

resolver: {

assetExts: defaults.assetExts.concat(['tflite', 'txt', 'sjson'])

}

}

Then include model files using source objects:

Spokestack.initialize(clientId, clientSecret, {

wakeword: {

filter: require('./filter.tflite'),

detect: require('./detect.tflite'),

encode: require('./encode.tflite')

},

nlu: {

model: require('./nlu.tflite'),

vocab: require('./vocab.txt'),

// Be sure not to use "json" here.

// We use a different extension (.sjson) so that the file is not

// immediately parsed as json and instead

// passes a require source object to Spokestack.

// The special extension is only necessary for local files.

metadata: require('./metadata.sjson')

}

})

Bundling model files is not required. Pass remote URLs to the same config options instead, and the files will be downloaded and cached the first time initialize is called.
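A minimal sketch of the remote-URL variant, assuming all model files are hosted under one base URL. The base URL, the file names, and the remoteModelConfig helper are illustrative placeholders, not values provided by Spokestack.

```typescript
// Hypothetical base URL; replace it with wherever your model files are hosted.
const MODEL_BASE_URL = 'https://example.com/models'

interface RemoteModelConfig {
  wakeword: { filter: string; detect: string; encode: string }
  nlu: { model: string; vocab: string; metadata: string }
}

// Builds the wakeword and nlu sections of the config from plain URL strings.
function remoteModelConfig(baseUrl: string): RemoteModelConfig {
  // Strip trailing slashes so the joined URLs never contain "//".
  const base = baseUrl.replace(/\/+$/, '')
  return {
    wakeword: {
      filter: base + '/filter.tflite',
      detect: base + '/detect.tflite',
      encode: base + '/encode.tflite'
    },
    nlu: {
      model: base + '/nlu.tflite',
      vocab: base + '/vocab.txt',
      // The .sjson extension is only needed for local files,
      // so a remote file can keep the plain .json extension.
      metadata: base + '/metadata.json'
    }
  }
}

// Usage inside an app:
//   await Spokestack.initialize(clientId, clientSecret, remoteModelConfig(MODEL_BASE_URL))
```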

Contributing

See the contributing guide to learn how to contribute to the repository and the development workflow.

Usage

Get started using Spokestack, or check out our in-depth tutorials on ASR, NLU, and TTS. Also be sure to take a look at the Cookbook for quick solutions to common problems.

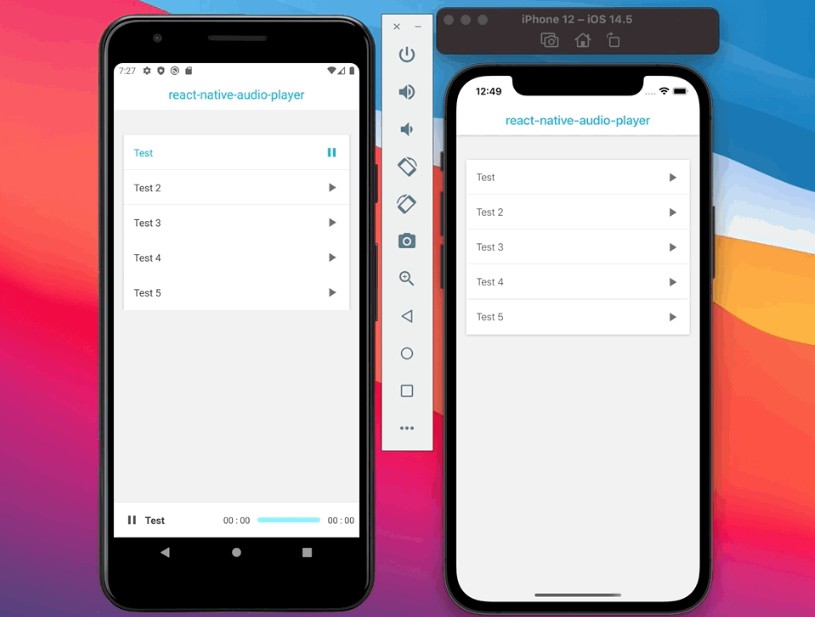

A working example app is included in this repo in the example/ folder.

import React, { useEffect, useState } from 'react'

import Spokestack from 'react-native-spokestack'

import { View, Button, Text } from 'react-native'

function App() {

const [listening, setListening] = useState(false)

const onActivate = () => setListening(true)

const onDeactivate = () => setListening(false)

const onRecognize = ({ transcript }) => console.log(transcript)

useEffect(() => {

Spokestack.addEventListener('activate', onActivate)

Spokestack.addEventListener('deactivate', onDeactivate)

Spokestack.addEventListener('recognize', onRecognize)

Spokestack.initialize(

process.env.SPOKESTACK_CLIENT_ID,

process.env.SPOKESTACK_CLIENT_SECRET

)

// This example starts the Spokestack pipeline immediately,

// but it could be delayed until after onboarding or other

// conditions have been met.

.then(Spokestack.start)

return () => {

Spokestack.removeAllListeners()

}

}, [])

return (

<View>

<Button onPress={() => Spokestack.activate()} title="Listen" />

<Text>{listening ? 'Listening...' : 'Idle'}</Text>

</View>

)

}

API Documentation

initialize

▸ initialize(clientId: string, clientSecret: string, config?: SpokestackConfig): Promise<void>

Defined in src/index.tsx:59

Initialize the speech pipeline; required for all other methods.

The first 2 args are your Spokestack credentials, available for free from https://spokestack.io. Avoid hardcoding these in your app. There are several ways to include environment variables in your code.

Using process.env:

https://babeljs.io/docs/en/babel-plugin-transform-inline-environment-variables/

Using a local .env file ignored by git:

https://github.com/goatandsheep/react-native-dotenv

https://github.com/luggit/react-native-config

See SpokestackConfig for all available options.

import Spokestack from 'react-native-spokestack'

// ...

await Spokestack.initialize(process.env.CLIENT_ID, process.env.CLIENT_SECRET, {

pipeline: {

profile: Spokestack.PipelineProfile.PTT_NATIVE_ASR

}

})
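Whichever approach supplies the environment variables, it can help to fail early when one is missing rather than passing undefined to initialize. A small sketch follows; the requireCredentials helper is hypothetical and not part of react-native-spokestack.

```typescript
interface SpokestackCredentials {
  clientId: string
  clientSecret: string
}

// Takes the two values directly, because build-time plugins typically replace
// individual process.env.NAME expressions rather than the whole process.env object.
function requireCredentials(
  clientId: string | undefined,
  clientSecret: string | undefined
): SpokestackCredentials {
  if (!clientId || !clientSecret) {
    throw new Error('Spokestack client ID or client secret is missing')
  }
  return { clientId: clientId, clientSecret: clientSecret }
}

// Usage inside an app:
//   const { clientId, clientSecret } = requireCredentials(
//     process.env.CLIENT_ID,
//     process.env.CLIENT_SECRET
//   )
//   await Spokestack.initialize(clientId, clientSecret)
```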

Parameters:

| Name | Type |
|---|---|
| clientId | string |
| clientSecret | string |
| config? | SpokestackConfig |

Returns: Promise<void>

start

▸ start(): Promise<void>

Defined in src/index.tsx:77

Start the speech pipeline.

The speech pipeline starts in the deactivated state.

import Spokestack from 'react-native-spokestack'

// ...

Spokestack.initialize(process.env.CLIENT_ID, process.env.CLIENT_SECRET)

.then(Spokestack.start)

Returns: Promise<void>

stop

▸ stop(): Promise<void>

Defined in src/index.tsx:90

Stop the speech pipeline.

This effectively stops ASR, VAD, and wakeword.

import Spokestack from 'react-native-spokestack'

// ...

await Spokestack.stop()

Returns: Promise<void>

activate

▸ activate(): Promise<void>

Defined in src/index.tsx:105

Manually activate the speech pipeline.

This is necessary when using a PTT profile.

VAD profiles can also activate ASR without the need

to call this method.

import Spokestack from 'react-native-spokestack'

// ...

<Button title="Listen" onPress={() => Spokestack.activate()} />

Returns: Promise<void>

deactivate

▸ deactivate(): Promise<void>

Defined in src/index.tsx:120

Deactivate the speech pipeline.

If the profile includes wakeword, the pipeline will go back

to listening for the wakeword.

If VAD is active, the pipeline can reactivate without calling activate().

import Spokestack from 'react-native-spokestack'

// ...

<Button title="Stop listening" onPress={() => Spokestack.deactivate()} />

Returns: Promise<void>

synthesize

▸ synthesize(input: string, format?: TTSFormat, voice?: string): Promise<string>

Defined in src/index.tsx:133

Synthesize some text into speech

Returns Promise<string> with the string

being the URL for a playable mpeg.

There is currently only one free voice available ("demo-male").

const url = await Spokestack.synthesize('Hello world')

play(url)

Parameters:

| Name | Type |
|---|---|
| input | string |
| format? | TTSFormat |
| voice? | string |

Returns: Promise<string>

speak

▸ speak(input: string, format?: TTSFormat, voice?: string): Promise<void>

Defined in src/index.tsx:148

Synthesize some text into speech

and then immediately play the audio through

the default audio system.

Audio session handling can get very complex and we recommend

using a RN library focused on audio for anything more than

very simple playback.

There is currently only one free voice available ("demo-male").

await Spokestack.speak('Hello world')

Parameters:

| Name | Type |
|---|---|
| input | string |
| format? | TTSFormat |
| voice? | string |

Returns: Promise<void>

classify

▸ classify(utterance: string): Promise<SpokestackNLUResult>

Defined in src/index.tsx:163

Classify the utterance using the

intent/slot Natural Language Understanding model

passed to Spokestack.initialize().

See https://www.spokestack.io/docs/concepts/nlu for more info.

const result = await Spokestack.classify('hello')

// Here's what the result might look like,

// depending on the NLU model

console.log(result.intent) // launch

Parameters:

| Name | Type |
|---|---|
| utterance | string |

Returns: Promise<SpokestackNLUResult>

SpokestackNLUResult

intent

• intent: string

Defined in src/types.ts:101

The intent based on the match provided by the NLU model

confidence

• confidence: number

Defined in src/types.ts:103

A number from 0 to 1 representing the NLU model's confidence in the intent it recognized, where 1 represents absolute confidence.

slots

• slots: { [key:string]: SpokestackNLUSlot; }

Defined in src/types.ts:105

Data associated with the intent, provided by the NLU model

SpokestackNLUSlot

type

• type: string

Defined in src/types.ts:92

The slot's type, as defined in the model metadata

value

• value: any

Defined in src/types.ts:94

The parsed (typed) value of the slot recognized in the user utterance

rawValue

• rawValue: string

Defined in src/types.ts:96

The original string value of the slot recognized in the user utterance
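To illustrate how these fields fit together, here is a sketch that summarizes a classification result and ignores low-confidence matches. The interfaces are local copies of the shapes documented above so the snippet is self-contained; the 0.6 cutoff and the describeResult helper are hypothetical.

```typescript
// Local copies of the documented result shapes.
interface SpokestackNLUSlot {
  type: string
  value: any
  rawValue: string
}

interface SpokestackNLUResult {
  intent: string
  confidence: number
  slots: { [key: string]: SpokestackNLUSlot }
}

// Hypothetical cutoff; tune it for your own model.
const MIN_CONFIDENCE = 0.6

// Returns "intent(slot=value, ...)" or "unsure" when confidence is too low.
function describeResult(result: SpokestackNLUResult): string {
  if (result.confidence < MIN_CONFIDENCE) {
    return 'unsure'
  }
  const slotNames = Object.keys(result.slots).sort()
  const parts = slotNames.map(
    slotName => slotName + '=' + String(result.slots[slotName].value)
  )
  return parts.length > 0
    ? result.intent + '(' + parts.join(', ') + ')'
    : result.intent
}

// Usage inside an app:
//   const result = await Spokestack.classify('a large coffee please')
//   const summary = describeResult(result)
```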

addEventListener

• addEventListener: typeof addListener

Defined in src/index.tsx:203

Bind to any event emitted by the native libraries

The events are: "recognize", "partial_recognize", "error", "activate", "deactivate", "timeout", and "play".

See the Events section below for descriptions of the events.

useEffect(() => {

const listener = Spokestack.addEventListener('recognize', onRecognize)

// Unsubscribe by calling remove when components are unmounted

return () => {

listener.remove()

}

}, [])

removeEventListener

• removeEventListener: typeof removeListener

Defined in src/index.tsx:211

Remove an event listener

Spokestack.removeEventListener('recognize', onRecognize)

removeAllListeners

• removeAllListeners: () => void

Defined in src/index.tsx:221

Remove any existing listeners

componentWillUnmount() {

Spokestack.removeAllListeners()

}
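When a component registers several handlers, one option is to collect the subscriptions returned by addEventListener and remove only those, instead of calling removeAllListeners, which would also drop listeners added elsewhere. The sketch below assumes only what the addEventListener example shows (a returned object with a remove() method); the subscribeAll helper and the interface names are hypothetical.

```typescript
// Minimal shapes the helper relies on.
interface ListenerHandle {
  remove(): void
}

interface ListenerHost {
  addEventListener(eventName: string, handler: (data: any) => void): ListenerHandle
}

type HandlerMap = { [eventName: string]: (data: any) => void }

// Registers every handler and returns a single function that removes
// exactly the listeners registered here.
function subscribeAll(host: ListenerHost, handlers: HandlerMap): () => void {
  const handles = Object.keys(handlers).map(eventName =>
    host.addEventListener(eventName, handlers[eventName])
  )
  return () => {
    handles.forEach(handle => handle.remove())
  }
}

// Usage inside a component:
//   useEffect(() => subscribeAll(Spokestack, { activate: onActivate, recognize: onRecognize }), [])
```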

TTSFormat

• SPEECHMARKDOWN: = 2

Defined in src/types.ts:65

• SSML: = 1

Defined in src/types.ts:64

• TEXT: = 0

Defined in src/types.ts:63
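As an illustration of the format parameter, the sketch below wraps plain text in a minimal SSML document and pairs it with the SSML format value. The enum is a local copy of the values listed above; escapeXml and asSsml are hypothetical helpers, and the usage comment assumes the enum is exposed as Spokestack.TTSFormat in the same way PipelineProfile is in the initialize example.

```typescript
// Local copy of the documented TTSFormat values.
enum TTSFormat {
  TEXT = 0,
  SSML = 1,
  SPEECHMARKDOWN = 2
}

// Escapes characters that are special in XML so plain text is safe inside SSML.
function escapeXml(text: string): string {
  return text
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
}

// Wraps plain text in a <speak> root element and reports the matching format.
function asSsml(text: string): { input: string; format: TTSFormat } {
  return {
    input: '<speak>' + escapeXml(text) + '</speak>',
    format: TTSFormat.SSML
  }
}

// Usage inside an app (assuming Spokestack.TTSFormat exists):
//   const { input } = asSsml('Tom & Jerry')
//   await Spokestack.speak(input, Spokestack.TTSFormat.SSML)
```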

Events

Use addEventListener(), removeEventListener(), and removeAllListeners() to add and remove event handlers. All events are available in both iOS and Android.

| Name | Data | Description |
|---|---|---|
| recognize | { transcript: string } | Fired whenever speech recognition completes successfully. |
| partial_recognize | { transcript: string } | Fired whenever the transcript changes during speech recognition. |
| timeout | null | Fired when an active pipeline times out due to lack of recognition. |
| activate | null | Fired when the speech pipeline activates, either through the VAD or manually. |
| deactivate | null | Fired when the speech pipeline deactivates. |
| play | { playing: boolean } | Fired when TTS playback starts and stops. See the speak() function. |
| error | { error: string } | Fired when there's an error in Spokestack. |

When an error event is triggered, any existing promises are rejected as it's difficult to know exactly from where the error originated and whether it may affect other requests.
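One way to keep event handling manageable is to fold events into a single state object with a pure function. The sketch below uses the event names and payload shapes from the table above; the VoiceState shape and the reduceVoiceEvent function are hypothetical, and events it does not recognize (such as timeout and play) leave the state unchanged.

```typescript
interface VoiceState {
  listening: boolean
  transcript: string
  error: string | null
}

const initialVoiceState: VoiceState = { listening: false, transcript: '', error: null }

// Returns the next state for a given event name and payload.
function reduceVoiceEvent(state: VoiceState, eventName: string, data: any): VoiceState {
  switch (eventName) {
    case 'activate':
      // A new activation starts with a clean transcript and no error.
      return { listening: true, transcript: '', error: null }
    case 'deactivate':
      return { listening: false, transcript: state.transcript, error: state.error }
    case 'partial_recognize':
    case 'recognize':
      return { listening: state.listening, transcript: data.transcript, error: state.error }
    case 'error':
      return { listening: state.listening, transcript: state.transcript, error: data.error }
    default:
      return state
  }
}

// Usage inside a component:
//   Spokestack.addEventListener('recognize', data =>
//     setState(current => reduceVoiceEvent(current, 'recognize', data))
//   )
```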

SpokestackConfig

These are the configuration options that can be passed to Spokestack.initialize(_, _, spokestackConfig). No options in SpokestackConfig are required.

SpokestackConfig has the following structure:

interface SpokestackConfig {

/**

* This option is only used when remote URLs are passed to fields such as `wakeword.filter`.

*

* Set this to true to allow downloading models over cellular.

* Note that `Spokestack.initialize()` will still reject the promise if

* models need to be downloaded but there is no network at all.

*

* Ideally, the app will include network handling itself and

* inform the user about file downloads.

*

* Default: false

*/

allowCellularDownloads?: boolean

/**

* Wakeword and NLU model files are cached internally.

* Set this to true whenever a model is changed

* during development to refresh the internal model cache.

*

* This affects models passed with `require()` as well

* as models downloaded from remote URLs.

*

* Default: false

*/

refreshModels?: boolean

/**

* This controls the log level for the underlying native

* iOS and Android libraries.

* See the TraceLevel enum for values.

*/

traceLevel?: TraceLevel

/**

* Most of these options are advanced aside from "profile"

*/

pipeline?: PipelineConfig

/** Only needed if using Spokestack.classify */

nlu?: NLUConfig

/**

* Only required for wakeword

* Most options are advanced aside from

* filter, detect, and encode for specifying config files.

*/

wakeword?: WakewordConfig

}

TraceLevel

• DEBUG: = 10

Defined in src/types.ts:50

• INFO: = 30

Defined in src/types.ts:52

• NONE: = 100

Defined in src/types.ts:53

• PERF: = 20

Defined in src/types.ts:51

PipelineConfig

profile

• Optional profile: PipelineProfile

Defined in src/types.ts:117

Profiles are collections of common configurations for Pipeline stages.

If Wakeword config files are specified, the default will be

TFLITE_WAKEWORD_NATIVE_ASR.

Otherwise, the default is PTT_NATIVE_ASR.

PipelineProfile

• PTT_NATIVE_ASR: = 2

Defined in src/types.ts:24

Apple/Android Automatic Speech Recognition is on

when the speech pipeline is active.

This is likely the more common profile

when not using wakeword.

• PTT_SPOKESTACK_ASR: = 5

Defined in src/types.ts:42

Spokestack Automatic Speech Recognition is on

when the speech pipeline is active.

This is likely the more common profile

when not using wakeword, but Spokestack ASR is preferred.

• TFLITE_WAKEWORD_NATIVE_ASR: = 0

Defined in src/types.ts:12

Set up wakeword and use local Apple/Android ASR.

Note that wakeword.filter, wakeword.encode, and wakeword.detect

are required if any wakeword profile is used.

• TFLITE_WAKEWORD_SPOKESTACK_ASR: = 3

Defined in src/types.ts:30

Set up wakeword and use remote Spokestack ASR.

Note that wakeword.filter, wakeword.encode, and wakeword.detect

are required if any wakeword profile is used.

• VAD_NATIVE_ASR: = 1

Defined in src/types.ts:17

Apple/Android Automatic Speech Recognition is on

when Voice Activity Detection triggers it.

• VAD_SPOKESTACK_ASR: = 4

Defined in src/types.ts:35

Spokestack Automatic Speech Recognition is on

when Voice Activity Detection triggers it.
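The six profiles are the combinations of three activation styles (wakeword, VAD, push-to-talk) with two ASR providers (native or Spokestack). A sketch of choosing one is shown below. The enum is a local copy of the values listed above; the Trigger type, the pickProfile function, and the nativeAsrAvailable flag are hypothetical, and how an app determines whether native ASR is available is left out.

```typescript
// Local copy of the documented PipelineProfile values.
enum PipelineProfile {
  TFLITE_WAKEWORD_NATIVE_ASR = 0,
  VAD_NATIVE_ASR = 1,
  PTT_NATIVE_ASR = 2,
  TFLITE_WAKEWORD_SPOKESTACK_ASR = 3,
  VAD_SPOKESTACK_ASR = 4,
  PTT_SPOKESTACK_ASR = 5
}

// How the pipeline gets activated: wakeword, voice activity, or push-to-talk.
type Trigger = 'wakeword' | 'vad' | 'ptt'

function pickProfile(trigger: Trigger, nativeAsrAvailable: boolean): PipelineProfile {
  if (trigger === 'wakeword') {
    return nativeAsrAvailable
      ? PipelineProfile.TFLITE_WAKEWORD_NATIVE_ASR
      : PipelineProfile.TFLITE_WAKEWORD_SPOKESTACK_ASR
  }
  if (trigger === 'vad') {
    return nativeAsrAvailable
      ? PipelineProfile.VAD_NATIVE_ASR
      : PipelineProfile.VAD_SPOKESTACK_ASR
  }
  return nativeAsrAvailable
    ? PipelineProfile.PTT_NATIVE_ASR
    : PipelineProfile.PTT_SPOKESTACK_ASR
}

// Usage inside an app:
//   await Spokestack.initialize(clientId, clientSecret, {
//     pipeline: { profile: pickProfile('ptt', false) }
//   })
```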

sampleRate

• Optional sampleRate: number

Defined in src/types.ts:121

Audio sampling rate, in Hz

frameWidth

• Optional frameWidth: number

Defined in src/types.ts:127

advanced

Speech frame width, in ms

bufferWidth

• Optional bufferWidth: number

Defined in src/types.ts:133

advanced

Buffer width, used with frameWidth to determine the buffer size
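For a sense of scale, the arithmetic relating these options can be sketched as follows. The samples-per-frame formula follows directly from the units (Hz and ms). The frames-in-buffer relationship assumes that bufferWidth is also given in ms and that the buffer holds bufferWidth / frameWidth frames; that is an assumption, not something stated above. The numbers in the usage comment are illustrative, not documented defaults.

```typescript
// Number of audio samples in one frame: sampleRate (Hz) * frameWidth (ms) / 1000.
function samplesPerFrame(sampleRate: number, frameWidth: number): number {
  return (sampleRate * frameWidth) / 1000
}

// Assumption: the buffer holds bufferWidth / frameWidth whole frames,
// with bufferWidth expressed in ms like frameWidth.
function framesInBuffer(bufferWidth: number, frameWidth: number): number {
  return Math.floor(bufferWidth / frameWidth)
}

// Illustrative values:
//   samplesPerFrame(16000, 20) is 320 samples per frame
//   framesInBuffer(300, 20) is 15 frames
```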

vadMode

• Optional vadMode: "quality" | "low-bitrate" | "aggressive" | "very-aggressive"

Defined in src/types.ts:137

Voice activity detector mode

vadFallDelay

• Optional vadFallDelay: number

Defined in src/types.ts:144

advanced

Falling-edge detection run length, in ms; this value determines

how many negative samples must be received to flip the detector to negative

vadRiseDelay

• Optional vadRiseDelay: number

Defined in src/types.ts:153

advanced

Android-only

Rising-edge detection run length, in ms; this value determines

how many positive samples must be received to flip the detector to positive

ansPolicy

• Optional ansPolicy: "mild" | "medium" | "aggressive" | "very-aggressive"

Defined in src/types.ts:161

advanced

Android-only for AcousticNoiseSuppressor

Noise policy

agcCompressionGainDb

• Optional agcCompressionGainDb: number

Defined in src/types.ts:170

advanced

Android-only for AcousticGainControl

Dynamic range compression rate, in dB

agcTargetLevelDbfs

• Optional agcTargetLevelDbfs: number

Defined in src/types.ts:178

advanced

Android-only for AcousticGainControl

Target peak audio level, in -dB;

to maintain a peak of -9dB, configure a value of 9

NLUConfig

model

• model: string | RequireSource

Defined in src/types.ts:189

The NLU Tensorflow-Lite model. If specified, metadata and vocab are also required.

This field accepts 2 types of values.

- A string representing a remote URL from which to download and cache the file (presumably from a CDN).

- A source object retrieved by a require or import (e.g. model: require('./nlu.tflite'))

metadata

• metadata: string | RequireSource

Defined in src/types.ts:197

The JSON file for NLU metadata. If specified, model and vocab are also required.

This field accepts 2 types of values.

- A string representing a remote URL from which to download and cache the file (presumably from a CDN).

- A source object retrieved by a require or import (e.g. metadata: require('./metadata.sjson'))

vocab

• vocab: string | RequireSource

Defined in src/types.ts:205

A txt file containing the NLU vocabulary. If specified, model and metadata are also required.

This field accepts 2 types of values.

- A string representing a remote URL from which to download and cache the file (presumably from a CDN).

- A source object retrieved by a require or import (e.g. vocab: require('./vocab.txt'))

inputLength

• Optional inputLength: number

Defined in src/types.ts:215

WakewordConfig

filter

• filter: string | RequireSource

Defined in src/types.ts:229

The "filter" Tensorflow-Lite model. If specified, detect and encode are also required.

This field accepts 2 types of values.

- A string representing a remote URL from which to download and cache the file (presumably from a CDN).

- A source object retrieved by a require or import (e.g. filter: require('./filter.tflite'))

The filter model is used to calculate a mel spectrogram frame from the linear STFT;

its inputs should be shaped [fft-width], and its outputs [mel-width]

detect

• detect: string | RequireSource

Defined in src/types.ts:241

The "detect" Tensorflow-Lite model. If specified, filter and encode are also required.

This field accepts 2 types of values.

- A string representing a remote URL from which to download and cache the file (presumably from a CDN).

- A source object retrieved by a require or import (e.g. detect: require('./detect.tflite'))

The encode model is used to perform each autoregressive step over the mel frames;

its inputs should be shaped [mel-length, mel-width], and its outputs [encode-width],

with an additional state input/output shaped [state-width]

encode

• encode: string | RequireSource

Defined in src/types.ts:252

The "encode" Tensorflow-Lite model. If specified, filter and detect are also required.

This field accepts 2 types of values.

- A string representing a remote URL from which to download and cache the file (presumably from a CDN).

- A source object retrieved by a require or import (e.g. encode: require('./encode.tflite'))

Its inputs should be shaped [encode-length, encode-width],

and its outputs

activeMax

• Optional activeMax: number

Defined in src/types.ts:262

The maximum length of an activation, in milliseconds,

used to time out the activation

activeMin

• Optional activeMin: number

Defined in src/types.ts:257

The minimum length of an activation, in milliseconds,

used to ignore a VAD deactivation after the wakeword

encodeLength

• Optional encodeLength: number

Defined in src/types.ts:290

advanced

The length of the sliding window of encoder output

used as an input to the classifier, in milliseconds

encodeWidth

• Optional encodeWidth: number

Defined in src/types.ts:296

advanced

The size of the encoder output, in vector units

fftHopLength

• Optional fftHopLength: number

Defined in src/types.ts:340

advanced

The length of time to skip each time the

overlapping STFT is calculated, in milliseconds

fftWindowSize

• Optional fftWindowSize: number

Defined in src/types.ts:324

advanced

The size of the signal window used to calculate the STFT,

in number of samples - should be a power of 2 for maximum efficiency
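Since fftWindowSize is expressed in samples and should ideally be a power of 2, while fftHopLength is expressed in milliseconds, a few small helpers can make the values easier to reason about. This is a sketch only; the function names are hypothetical and the sample rate in the usage comment is illustrative.

```typescript
// True when n is a positive integer power of two (1, 2, 4, 8, ...).
function isPowerOfTwo(n: number): boolean {
  return n > 0 && Math.floor(n) === n && (n & (n - 1)) === 0
}

// Smallest power of two that is greater than or equal to n.
function nextPowerOfTwo(n: number): number {
  let size = 1
  while (size < n) {
    size *= 2
  }
  return size
}

// Converts a duration in milliseconds (such as fftHopLength) to a sample count.
function msToSamples(ms: number, sampleRate: number): number {
  return Math.round((ms * sampleRate) / 1000)
}

// Illustrative values at a 16000 Hz sample rate:
//   a 25 ms window is msToSamples(25, 16000) = 400 samples,
//   and nextPowerOfTwo(400) = 512 would be the efficient window size.
```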

fftWindowType

• Optional fftWindowType: string

Defined in src/types.ts:333

advanced

Android-only

The name of the windowing function to apply to each audio frame

before calculating the STFT; currently the "hann" window is supported

melFrameLength

• Optional melFrameLength: number

Defined in src/types.ts:354

advanced

The length of time to skip each time the

overlapping STFT is calculated, in milliseconds

melFrameWidth

• Optional melFrameWidth: number

Defined in src/types.ts:361

advanced

The size of each mel spectrogram frame,

in number of filterbank components

preEmphasis

• Optional preEmphasis: number

Defined in src/types.ts:347

advanced

The pre-emphasis filter weight to apply to

the normalized audio signal (0 for no pre-emphasis)

requestTimeout

• Optional requestTimeout: number

Defined in src/types.ts:276

iOS-only

Length of time to allow an Apple ASR request to run, in milliseconds.

Apple has an undocumented limit of 60000ms per request.

rmsAlpha

• Optional rmsAlpha: number

Defined in src/types.ts:317

advanced

The Exponentially-Weighted Moving Average (EWMA) update

rate for the current RMS signal energy (0 for no RMS normalization)

rmsTarget

• Optional rmsTarget: number

Defined in src/types.ts:310

advanced

The desired linear Root Mean Squared (RMS) signal energy,

which is used for signal normalization and should be tuned

to the RMS target used during training

stateWidth

• Optional stateWidth: number

Defined in src/types.ts:302

advanced

The size of the encoder state, in vector units (defaults to wake-encode-width)

threshold

• Optional threshold: number

Defined in src/types.ts:283

advanced

The threshold of the classifier's posterior output,

above which the trigger activates the pipeline, in the range [0, 1]

wakewords

• Optional wakewords: string

Defined in src/types.ts:269

iOS-only

A comma-separated list of wakeword keywords

Only necessary when not passing the filter, detect, and encode paths.